Testing worries got you down? If you’re a marketer who’s confused about testing—the different types, common uses, advantages, limitations, etc.—you’re not alone.

Testing is a hugely important component of conversion rate optimization. It’s a way to take your website’s performance from meh or so-so to excellent. But testing can also be tricky—if you don’t know what you’re doing.

If you’re familiar with conversion rate optimization, you’re likely familiar with the two main types of testing: A/B split-testing and multivariate.

But what’s the difference between the two? And how do you know when to run one test type versus the other?

You’ve got questions. And we’ve got answers.

Below, you’ll learn the key differences between split-testing and multivariate testing as well as the pros and cons of each test type, best practices, and what you should avoid. We also share a few case studies of tests that we’ve run here at Conversion Fanatics.

Let’s take a look…

Here’s What You Need To Know About A/B Split-Testing

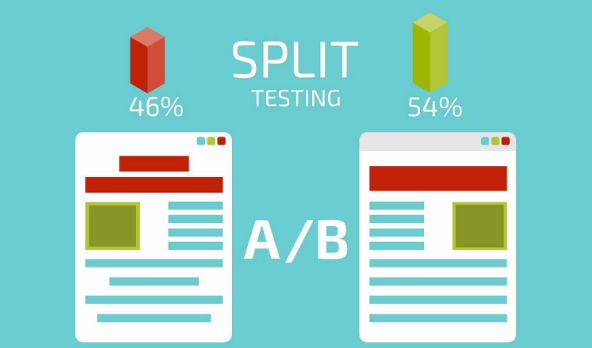

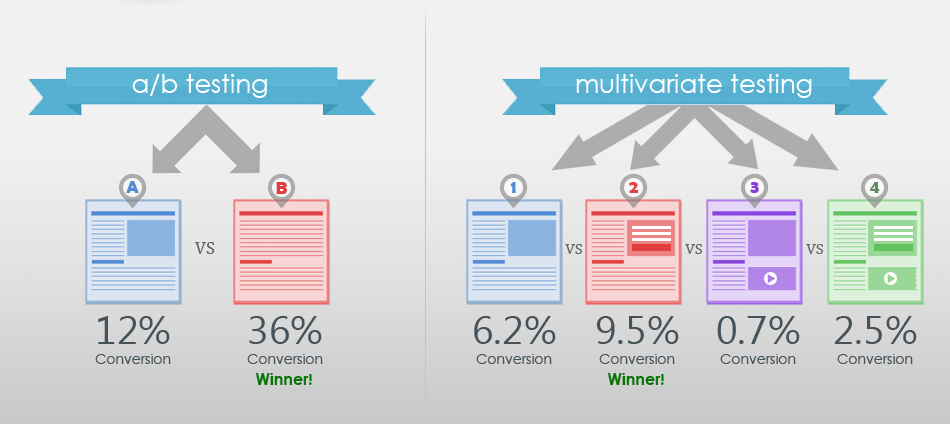

A/B split-testing is the best-known type of optimization experiment. It pits two versions of your website against one another with one significant difference between the two. Then, it splits your traffic evenly between the two versions to see which performs better according to specific metrics.

For example, say you want to test the optimal placement for your call-to-action: above the fold or below the fold. You’d create two versions of your webpage. In version A, you’d place your call-to-action above the fold. And in version B, you’d put it below the fold.

Then, you’d send 50% of your traffic to version A and the other 50% to version B. Once the test was complete (i.e. after it reached statistical significance), you’d compare the two versions to see which placement had more visitors taking action.
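To make the comparison step concrete, here is a minimal sketch of one common way to check whether the difference between two versions is statistically significant: a two-proportion z-test. The visitor and conversion counts are hypothetical, and your testing tool may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally well
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: 5,000 visitors per version
z, p_value = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
significant = p_value < 0.05  # 0.05 corresponds to the 95% standard
print(f"z = {z:.2f}, p = {p_value:.4f}, significant = {significant}")
```

With these made-up numbers, version B converts at 5% versus 4% for version A, and the p-value falls below 0.05, so the difference would count as significant at the 95% level.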

A/B split-testing helps you evaluate your site’s design by revealing which version is more likely to yield the best results. It’s an integral part of effective conversion rate optimization. And most businesses benefit from its practice.

These Are The Advantages Of A/B Split-Tests

A/B split-tests are the simplest way to evaluate your webpage’s design. They work with a limited number of tracked elements and versions of your webpage, so they deliver quick, reliable data. This also makes split-test results easier to interpret.

A/B split-tests typically look at more dramatic webpage changes, which are more likely to lead to significant changes in your conversion rates.

Additionally, A/B split-tests do not need large amounts of traffic to reach statistical significance. With split-tests, you divide your traffic into 2-4 segments (depending on whether it’s an A/B, A/B/C, or A/B/C/D split-test). So you don’t need to worry about spreading your traffic too thin and getting unreliable results.

Because of this, A/B split-tests are the more workable option for businesses, especially new businesses or those whose websites don’t generate much traffic.

These Are The Limitations

The simplicity of split-tests is a benefit as well as a limitation. As previously stated, split-tests measure the effectiveness of 2-4 versions of your webpage with one significant change. But testing multiple, more subtle changes at a time is not as easy to do with split-tests.

The other notable limitation of split-tests is that they assess the performance of each version of your webpage only as a whole. They don’t check how individual page elements interact with one another to gauge which elements are most responsible for the tests’ results.

A/B Split-Testing Best Practices

You should choose A/B split-tests in the following circumstances:

- You don’t have a lot of traffic.

- The changes you’re testing are not subtle; they are significant redesigns.

- You want to test only a small number of elements at a time.

The easiest way to use A/B tests is when there is only one element you want to change on a page. In this case, an A/B split-test is straightforward and the results are easy to track and analyze.

Here’s What To Avoid With A/B Split-Tests

One of the biggest mistakes people make with A/B split-tests is not running their tests long enough. If you don’t run your test long enough to reach statistical significance (the standard threshold is a 95% confidence level), your results won’t be reliable and you won’t be able to draw meaningful conclusions from them.
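To get a feel for what "long enough" means, you can estimate how many visitors each version needs before you start. The sketch below uses a standard approximation for comparing two conversion rates. The baseline rate, expected rate, and 80% power setting are assumptions chosen for illustration, not figures from our tests.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate visitors needed per version to detect the given lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided 95% threshold
    z_beta = NormalDist().inv_cdf(power)           # chance of catching a real lift
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical: you convert at 4% today and hope the change lifts that to 5%
needed = sample_size_per_variation(0.04, 0.05)
print(f"Visitors needed per version: {needed}")
```

The smaller the lift you hope to detect, the more visitors each version needs, which is one reason subtle changes take longer to test.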

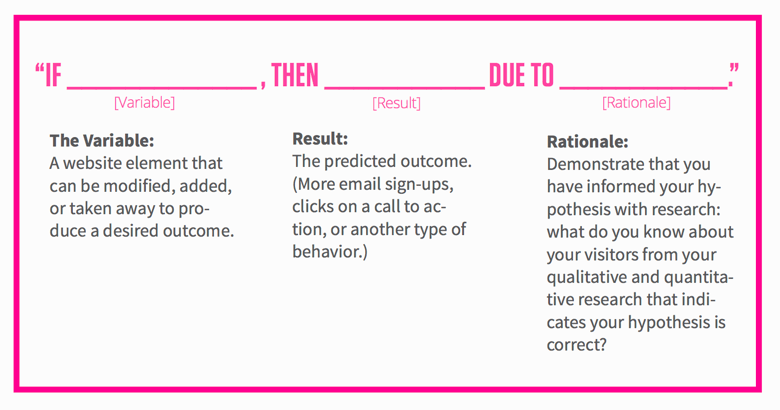

Also, people frequently don’t form well-crafted hypotheses about their split-tests. Forming a hypothesis that’s based on knowledge and data helps you create tests that offer the most effective solutions.

Forming a hypothesis gets you to think critically about the root cause of performance issues on your website. That way, you’re not testing elements at random or testing the wrong things, which is another mistake to avoid…

If changing a particular element or testing a webpage with barely any traffic does little to help your bottom line, there’s no point in testing it.

Lastly, you want to avoid conducting split-tests with too many variations, as this complicates matters and makes the testing process too lengthy.

Mini-Case Studies

Here at Conversion Fanatics, we’ve seen loads of businesses improve their conversion rates with the help of A/B split-tests.

Here are two examples:

For one of our clients, we wanted to see if adding two trust icons to their website would positively impact the company’s conversions. One of the icons highlighted the company’s 30-day money-back guarantee. And the other icon highlighted their products’ 90-day warranty. In the split-test, version A was the control and version B included the two icons.

At the end of the test, version B emerged as the clear winner. Adding the icons to the company’s webpage increased their sales conversions by 26% and the revenue per visitor by 25%. Not too shabby for such a simple change and split-test.

In a similar test, we compared one version of a webpage that included a McAfee trust element and one that did not. In this test, adding the McAfee element boosted sales conversions by 6% and revenue per visitor by 15%.

We could go on and on about all of the split-tests we’ve run to help our clients improve their conversion rates. To read more examples, take a look at our case studies.

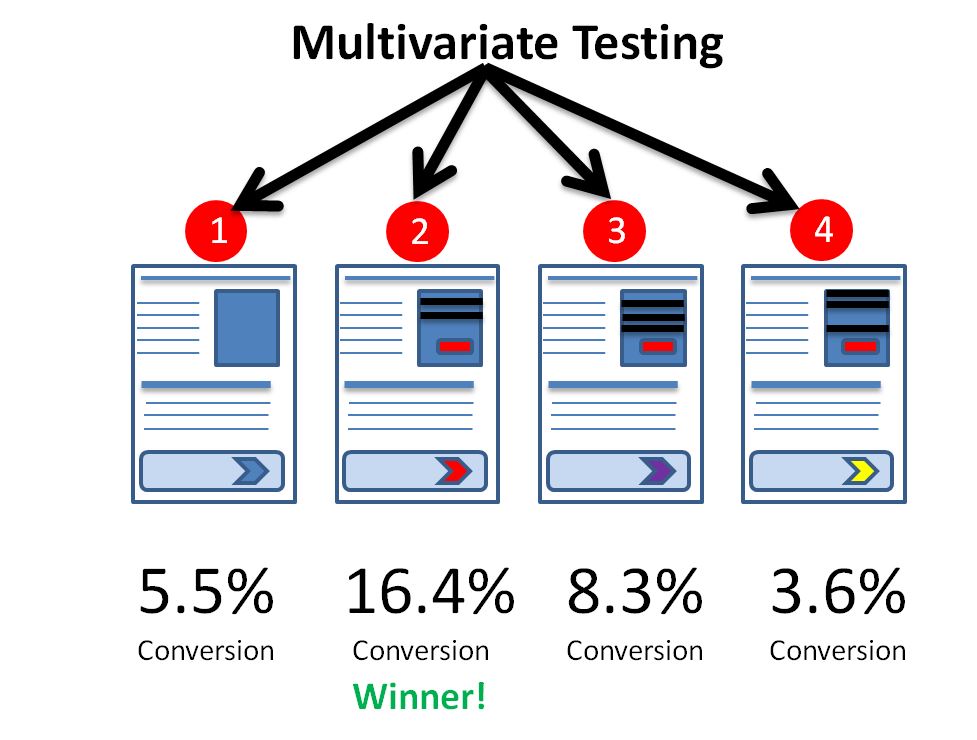

Here’s What You Need To Know About Multivariate Testing

Multivariate testing is more complex than A/B split-testing. A/B split-tests look at two versions of a webpage with a single difference between them. The original version of a webpage (the control) is pitted against a variation with only one element changed.

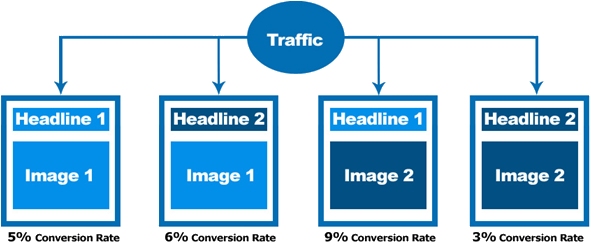

Multivariate tests, on the other hand, look at several versions of a webpage with multiple different elements to see how these elements interact and affect your conversions. Multivariate tests aim to find the combination of elements that’s most likely to achieve your objectives.

For example, a multivariate test might look at changing the trust symbols on a webpage as well as the call-to-action placement and button text. The test could include two different trust symbols, three call-to-action placements, and three options for the button text.

This creates a total of 18 possible combinations (2 × 3 × 3), or 18 versions of the webpage. The multivariate test would then test all of these versions against one another simultaneously to see which combination produced the best results based on specific metrics.
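If you want to see where the 18 comes from, here is a short sketch that lists every combination for the example above. The option names are placeholders, not real page elements.

```python
from itertools import product

# Placeholder names for the options in the example
trust_symbols = ["trust_symbol_1", "trust_symbol_2"]
cta_placements = ["placement_1", "placement_2", "placement_3"]
button_texts = ["button_text_1", "button_text_2", "button_text_3"]

# Every combination of one trust symbol, one placement, and one button text
variations = list(product(trust_symbols, cta_placements, button_texts))

print(len(variations))  # 2 * 3 * 3 = 18
for trust_symbol, placement, text in variations[:3]:
    print(trust_symbol, placement, text)
```

Adding just one more option to any element multiplies the total, so the number of versions grows quickly.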

Two main types of multivariate tests exist:

- Full factorial – This type tests every combination of elements and distributes traffic evenly among the variations until a winner emerges. Full factorial tests are more thorough, but they need a lot more traffic to be effective.

- Fractional factorial – This type tests a sampling, or a fraction of element combinations, and uses statistical analysis to declare a winner. Fractional factorial tests need less traffic, but they rely, in part, on assumptions as opposed to data.

These Are The Advantages Of Multivariate Testing

You might want to complicate the scenario even further by testing two different placements for each image on the page. Multivariate testing lets you test more complex variations of your webpage (as in the example above) more quickly and easily than split-testing.

Also, since multivariate tests compare multiple variables at the same time, they reveal information about how the variables interact with each other and impact visitor behavior in a way that A/B tests don’t… or not as easily.

These Are The Disadvantages

With multivariate tests, you split your traffic into smaller segments to accommodate each variation (as opposed to splitting your traffic in half as you do with A/B split-tests). Consequently, to achieve statistically significant results, you need a larger amount of traffic. If you spread your traffic too thin, your results won’t be reliable. And many new businesses don’t yet have enough traffic to run reliable multivariate tests.
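Here is a rough sketch of how the number of variations stretches out a test. It assumes each variation needs 1,000 visitors and that your page gets 600 visitors a day. Both numbers are hypothetical, and the calculation assumes traffic is split evenly.

```python
from math import ceil


def days_to_finish(visitors_needed_per_variation, num_variations, daily_visitors):
    """Days until every variation has received the visitors it needs."""
    total_needed = visitors_needed_per_variation * num_variations
    return ceil(total_needed / daily_visitors)


ab_days = days_to_finish(1000, num_variations=2, daily_visitors=600)
mvt_days = days_to_finish(1000, num_variations=18, daily_visitors=600)

print(f"A/B split-test (2 versions): {ab_days} days")
print(f"Multivariate test (18 versions): {mvt_days} days")
```

Under these assumptions, the two-version split-test finishes in 4 days, while the 18-version multivariate test needs 30.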

Multivariate tests also take longer than A/B split-tests to achieve statistical significance since they involve multiple variations. Plus, they typically assess more subtle changes rather than a major design overhaul, so the impact on your conversions may not be as dramatic.

Multivariate Testing Best Practices

To get the most out of your multivariate tests, use them together with A/B split-tests. Once you get an A/B split-test winner, follow up with a multivariate test to learn which page elements play the biggest role in achieving your conversion goals.

To do multivariate testing right, you need to have a sufficient amount of traffic, as mentioned earlier. Plus, you need to make sure you run your tests long enough.

You also need to account for more false positives by using multiple testing corrections and designing a proper test in the first place. You should always craft a plan and clearly define your expectations before starting a multivariate test.
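One simple (and conservative) multiple testing correction is the Bonferroni correction: divide your significance threshold by the number of comparisons you make. The sketch below shows the idea with hypothetical p-values. It is only one of several corrections, and your testing tool may apply a different one.

```python
def bonferroni_significant(p_values, alpha=0.05):
    """Flag which comparisons stay significant after a Bonferroni correction."""
    threshold = alpha / len(p_values)  # stricter cutoff as comparisons increase
    return [p < threshold for p in p_values]


# Hypothetical p-values for three variations, each compared with the control
p_values = [0.04, 0.002, 0.20]
results = bonferroni_significant(p_values)
print(results)  # [False, True, False]
```

Notice that the first p-value (0.04) would pass the usual 0.05 cutoff on its own, but it no longer counts once the cutoff is tightened to 0.05 / 3.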

Here’s What You Should Avoid With Multivariate Testing

Several of the mistakes to avoid with multivariate testing are the same mistakes to avoid with A/B split-testing. These mistakes include…

- Not running your tests long enough – This can be especially problematic with multivariate testing. Multivariate tests involve multiple variations. And the more variations you have, the longer your tests need to run to achieve statistical significance.

- Not forming a hypothesis – As with A/B split-tests, you need to create hypotheses for multivariate tests about the results you expect. Hypotheses make you think critically about the tests you run so you get the biggest bang for your buck.

- Not using data to inform your decisions about what to test – You need data about your site’s performance to help you decide which elements are most likely to impact your conversions—these are the elements you want to test. Testing elements at random without looking at data is like throwing spaghetti at the wall to see what sticks. It’s an inefficient use of your time.

Other mistakes to avoid include conducting tests with too little traffic and using a multivariate test to assess individual page elements.

If your site doesn’t have a lot of traffic, when you split your traffic among all of the variations in your multivariate test, you won’t have enough traffic to create an adequate sample size. And without an adequate sample size, your results are unreliable and you can’t draw reasonable conclusions from them.

Also, the purpose of multivariate testing is to test multiple variables (hence the name). So if you want to test a single page element, you’d be better off running an A/B split-test.

Conclusion

Both multivariate and A/B split-tests play important roles in conversion rate optimization. Each test type serves a purpose and has its own pros and cons. Using one test type over another also makes more sense in certain situations.

But multivariate and A/B split-tests can also complement one another. You can implement both test types to drill down even further and make the absolute best choices for your website.

What are your thoughts on A/B split-testing? Have you tried multivariate testing before? Do you prefer one experiment type to the other? Use our contact page to get in touch and let us know what you think!